I now have something that can at least broadcast messages over an overlay network!

https://github.com/ryogrid/buzzoon/releases/tag/v0.0.3

How to try it (starting a server)

Two servers that have already joined the overlay network are running at:

ryogrid.net:9999

ryogrid.net:8888

Connect to either one.

Use the binary for your platform: buzzoon.exe on Windows, buzzon_linux on Linux, buzzoon_mac on Mac.

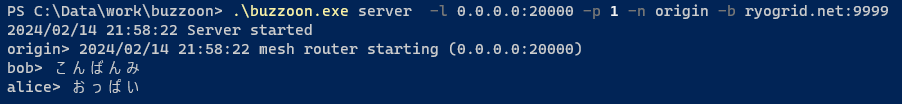

Start a local server as follows:

$ .\buzzoon.exe server -l 0.0.0.0:20000 -p 1985 -n ryo -b ryogrid.net:9999

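- The -l option is the local address to bind to.

- The -p option is meant to take a public key, but signature verification and the like are not implemented yet, so for now any decimal integer that is unlikely to collide with someone else's is fine.

  - This value is used as the node ID on the overlay network.

- The -n option sets a nickname. The equivalent of Nostr's kind-0 is not implemented yet, so messages are sent under the nickname given here.

- The -b option specifies the address of a server that has already joined the overlay network. For now, either of the two ryogrid.net nodes will do.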
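As a rough illustration of how the four options fit together, here is a small Python sketch that assembles the same command line as the example above. The helper names `random_node_id` and `build_server_command` are hypothetical, and the 63-bit range for the node ID is purely an assumption: the post only says "a decimal integer unlikely to collide".

```python
import secrets


def random_node_id(bits: int = 63) -> int:
    """Pick a random non-negative integer to pass to -p.

    The 63-bit width is an assumption; the accepted range is not documented here.
    """
    return secrets.randbelow(2 ** bits)


def build_server_command(binary, listen, node_id, nickname, bootstrap):
    """Return the argv list for `<binary> server -l ... -p ... -n ... -b ...`."""
    return [
        binary, "server",
        "-l", listen,        # local address to bind to
        "-p", str(node_id),  # stand-in for a public key, used as the node ID
        "-n", nickname,      # nickname attached to sent messages
        "-b", bootstrap,     # a server already in the overlay network
    ]


if __name__ == "__main__":
    cmd = build_server_command(
        "./buzzoon.exe", "0.0.0.0:20000", random_node_id(), "ryo", "ryogrid.net:9999"
    )
    print(" ".join(cmd))
```

The resulting list could be handed to `subprocess.run` unchanged, which avoids shell quoting problems with nicknames that contain spaces.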

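Sending a message

In the example above, a REST endpoint is listening at http://127.0.0.1:20001/postEvent, so send your request there.

(The port is the one given with the -l option, plus 1.)

With curl, it looks like this: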
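The port rule can be written down as a tiny helper. This is only a sketch of the rule as stated in this post; `rest_endpoint` is a hypothetical name, and the default host of 127.0.0.1 assumes you are calling the server from the same machine.

```python
def rest_endpoint(listen_addr: str, host: str = "127.0.0.1") -> str:
    """Derive the postEvent URL from the address passed to -l.

    The REST port is the -l port plus 1.
    """
    _, _, port = listen_addr.rpartition(":")
    return f"http://{host}:{int(port) + 1}/postEvent"


if __name__ == "__main__":
    print(rest_endpoint("0.0.0.0:20000"))
```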

$ curl -X POST -H "Content-Type: application/json" -d '{"Content":"こんばんみ"}' http://127.0.0.1:20001/postEvent

Response:

{
  "Status": "SUCCESS"
}
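If you would rather post from a script than from curl, the same request can be sketched with Python's standard library. The function names below are hypothetical; the only things taken from this post are the `Content` field in the request body and the `Status` field in the response. Any other fields or error responses the server may return are not covered.

```python
import json
import urllib.request


def build_post_event_request(url: str, content: str) -> urllib.request.Request:
    """Build the same POST that the curl example sends."""
    body = json.dumps({"Content": content}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def is_success(response_body: bytes) -> bool:
    """Return True if the JSON response has Status == "SUCCESS"."""
    return json.loads(response_body).get("Status") == "SUCCESS"


def post_event(url: str, content: str, timeout: float = 5.0) -> bool:
    """Send the message and report whether the server answered SUCCESS."""
    request = build_post_event_request(url, content)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return is_success(response.read())
```

With a local server running as in the example, `post_event("http://127.0.0.1:20001/postEvent", "hello")` would send one message.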

Received messages are printed to the server's stdout.

Give it a try if you like!